A skill you cannot measure against no skill is just a prompt you felt good about. The whole value of a skill is the delta it buys you over a bare model, and that delta only shows up when you run both sides of the comparison.

The Problem With How Most People Ship Skills

Writing a skill is cheap now. Claude Code, the Agent SDK, and every internal harness I touch make it trivial to drop a SKILL.md in a folder and claim I have taught the agent something new. The problem is that almost nobody measures whether the skill actually helped. They try it once on a friendly prompt, see a good output, and ship it.

That is the worst possible feedback loop. The model was probably going to handle the friendly prompt on its own. The skill might be adding nothing. Worse, it might be burning tokens, slowing the run, and nudging the agent toward a narrower solution than it would have picked for free. Without a baseline, I cannot tell the difference between a skill that is earning its keep and a skill that is quietly making everything worse.

The fix is not complicated. It is eval-driven iteration, the same loop I run on prompts, models, and retrieval systems. I owe the concrete shape of the loop below to Anthropic’s Evaluating skill output quality guide, which is the cleanest writeup I have seen. The opinions are mine.

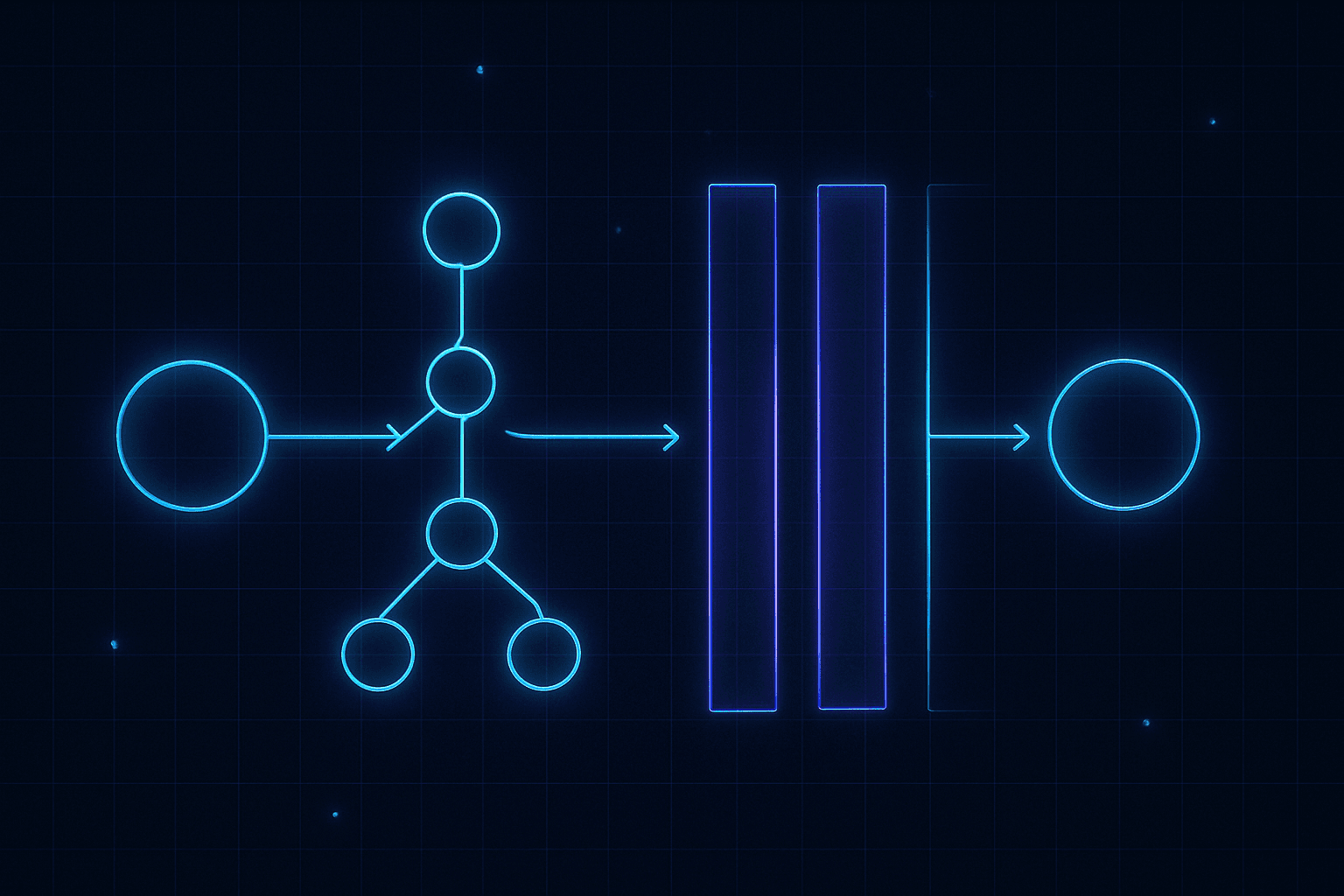

The Core Move: Always Compare Against No Skill

If I remember one thing from the whole topic, it is this. Every test case runs twice. Once with the skill loaded, once without. The without run is my baseline. The delta between the two is the only honest measurement of whether the skill is pulling its weight.

This sounds obvious and I still catch myself skipping it. It is tempting to look at a nice with-skill output and call it done. The discipline is to force myself to read the bare-model output too, because half the time the bare model produced something just as good.

When I am iterating on an existing skill instead of writing a new one, the same rule applies. I snapshot the previous version and treat it as the baseline. The delta I care about is now improvement over my last release, not improvement over nothing.
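Snapshotting the previous version can be as small as a directory copy. The sketch below is one way to do it, not a prescribed tool: the function name `snapshot_skill`, the `snapshots_root` folder, and the `label` naming are all hypothetical choices made for illustration.

```python
import shutil
from pathlib import Path


def snapshot_skill(skill_dir: Path, snapshots_root: Path, label: str) -> Path:
    """Copy the current skill directory to snapshots_root/label and return the copy.

    Refuses to overwrite an existing snapshot, so a baseline cannot change silently.
    """
    target = snapshots_root / label
    if target.exists():
        raise FileExistsError(f"snapshot {label!r} already exists at {target}")
    # copytree creates any missing parent folders and copies bundled scripts too.
    shutil.copytree(skill_dir, target)
    return target
```

The baseline arm of the next iteration would then load the skill from the snapshot folder while the other arm loads the working copy.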

What a Test Case Actually Contains

A test case has three parts, and they are all about realism.

- A prompt that sounds like a real user request, not a cleaned-up spec. Real users mention filenames, paste half an error message, and forget to say what format they want the answer in.

- A plain English description of what success looks like. No pass or fail checks yet. Those come after I have seen what the model actually produces.

- Any input files the skill needs to touch, checked into the skill directory.

Here is the shape I use for a skill I am building around video transcript summarisation.

# evals/evals.py
EVALS = [
    {
        "id": "transcript-long-interview",
        "prompt": "here's a 90 min interview transcript in data/interview.vtt, "
                  "can you pull the 5 strongest quotes and say why each one matters?",
        "expected_output": "Five quoted passages with speaker attribution and a "
                           "one-sentence rationale per quote.",
        "files": ["evals/files/interview.vtt"],
    },
    {
        "id": "transcript-noisy-autocaptions",
        "prompt": "transcript is rough, lots of [inaudible] and filler. "
                  "give me a clean summary focused on decisions and owners.",
        "expected_output": "Decision-focused summary that ignores filler "
                           "and flags anything unclear rather than inventing it.",
        "files": ["evals/files/meeting-autocap.vtt"],
    },
]

I start with three cases, not thirty. The goal of the first round is to see how the skill behaves, not to build a leaderboard. I will double the set in iteration two, once I know where the interesting failures live.

One of those three cases must be an edge case. A malformed file, a vague request, a prompt that pushes on the exact ambiguity I suspect is lurking in my SKILL.md. The boring cases teach me nothing.
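A quick sanity check on the test set catches missing fields and input files before any tokens are spent. This is a minimal sketch under stated assumptions: the `edge_case` flag is a hypothetical extra key, not part of the case format shown earlier, and `validate_cases` is an illustrative name.

```python
from pathlib import Path

REQUIRED_KEYS = ("id", "prompt", "expected_output")


def validate_cases(cases: list, root: Path = Path(".")) -> list:
    """Return human-readable problems; an empty list means the set is usable."""
    problems = []
    seen_ids = set()
    for case in cases:
        cid = case.get("id", "<missing id>")
        for key in REQUIRED_KEYS:
            if not case.get(key):
                problems.append(f"{cid}: missing {key}")
        if cid in seen_ids:
            problems.append(f"{cid}: duplicate id")
        seen_ids.add(cid)
        # Input files are resolved relative to root (assumed to be the repo root).
        for rel in case.get("files", []):
            if not (root / rel).is_file():
                problems.append(f"{cid}: input file not found: {rel}")
    # Hypothetical convention: mark at least one case with "edge_case": True.
    if not any(case.get("edge_case") for case in cases):
        problems.append("no case is marked edge_case=True")
    return problems
```

Running it at the top of the runner and aborting on a non-empty result keeps a broken case from silently skewing the baseline.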

Isolate Every Run

The rule I break most often is context hygiene. Every eval run must start from a clean slate. No leftover memory from the last attempt, no half-remembered instructions from the session where I wrote the skill. If the run inherits context, it inherits bias, and the measurement stops meaning anything.

In Claude Code I get this for free by spawning each run as a subagent. In the Agent SDK I spin up a fresh session per run. In a plain script I launch a new process per case. Whatever it takes, the agent in the run has only two inputs: the skill (or not) and the prompt.
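For the plain-script route, the sketch below shows one way to get a fresh process per case using only the standard library. It is an illustration, not the article's runner: `run_isolated` is a hypothetical helper, the environment allowlist is an assumption about what a child Python needs to start, and a real harness would launch the agent instead of a one-line snippet.

```python
import os
import subprocess
import sys
import tempfile
from typing import Optional

# Assumed allowlist: just enough for the child interpreter to start.
PASSTHROUGH = ("PATH", "SYSTEMROOT", "LD_LIBRARY_PATH")


def run_isolated(code: str, extra_env: Optional[dict] = None) -> subprocess.CompletedProcess:
    """Run a Python snippet in a new process with a scratch cwd and a minimal env."""
    env = {k: os.environ[k] for k in PASSTHROUGH if k in os.environ}
    env.update(extra_env or {})
    # The scratch directory is deleted on exit, so no files survive into the next run.
    with tempfile.TemporaryDirectory() as scratch:
        return subprocess.run(
            [sys.executable, "-c", code],
            cwd=scratch,
            env=env,
            capture_output=True,
            text=True,
            timeout=60,
        )
```

Because neither the working directory nor the parent's environment variables carry over, two consecutive calls cannot see each other's files or session state.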

Here is the shape of the runner I use on top of the Agent SDK. Each case runs twice into its own clean workspace, timing and outputs land on disk, and nothing is shared between runs.

# evals/run.py
import asyncio, json, shutil, time
from pathlib import Path

from claude_agent_sdk import ClaudeAgentOptions, ClaudeSDKClient, ResultMessage

from evals import EVALS

SKILL_DIR = Path("skills/transcript-summariser")
WORKSPACE = Path("workspace/iteration-1")


async def run_case(case: dict, with_skill: bool) -> None:
    variant = "with_skill" if with_skill else "without_skill"
    out_dir = WORKSPACE / case["id"] / variant
    # The agent works inside outputs/, which is the folder grade.py reads.
    outputs = out_dir / "outputs"
    outputs.mkdir(parents=True, exist_ok=True)
    for src in case.get("files", []):
        shutil.copy(src, outputs / Path(src).name)

    options = ClaudeAgentOptions(
        cwd=str(outputs),
        allowed_tools=["Read", "Write", "Bash"],
        permission_mode="bypassPermissions",
        # The only difference between the two arms: skill body or nothing.
        system_prompt=(SKILL_DIR / "SKILL.md").read_text() if with_skill else None,
    )

    start = time.monotonic()
    tokens = 0
    async with ClaudeSDKClient(options=options) as client:
        await client.query(case["prompt"])
        async for message in client.receive_response():
            # Usage arrives as a plain dict on the final ResultMessage.
            if isinstance(message, ResultMessage) and message.usage:
                tokens += message.usage.get("input_tokens", 0)
                tokens += message.usage.get("output_tokens", 0)

    (out_dir / "timing.json").write_text(json.dumps({
        "total_tokens": tokens,
        "duration_ms": int((time.monotonic() - start) * 1000),
    }, indent=2))


async def main() -> None:
    # Baseline first, then the skill arm, one fresh client per run.
    for case in EVALS:
        await run_case(case, with_skill=False)
        await run_case(case, with_skill=True)


if __name__ == "__main__":
    asyncio.run(main())

The important line is system_prompt=... being set to the skill body or to None. That single toggle is the entire difference between the with-skill and without-skill arms of the experiment.

Assertions Come Second

The mistake I used to make was writing assertions before running the skill. I would guess what the output ought to look like, bake those guesses into pass or fail checks, and then wonder why my scores were useless. Half the assertions were wrong, and the other half were hitting things the model would always do regardless.

Now I run the first round with just the prompt and the expected output, read the real outputs, and then write the assertions. An assertion earns its place if it is concrete, verifiable, and would actually flip to fail on a bad output.

Good:

- The output file parses as valid JSON.

- The chart has labeled axes and exactly three bars.

- The summary attributes every quote to a named speaker.

Bad:

- The output is good.

- The summary uses the exact phrase “key decisions.”

Some qualities refuse to decompose into pass or fail. Writing style, whether the tone matches the user, whether the chart is pleasant to look at. I do not force those into assertions. I save them for the human review step.

Once I have assertions, they hang off the test case. I keep them in the same module so the whole case is one object.

# evals/evals.py
EVALS = [
    {
        "id": "transcript-long-interview",
        "prompt": "here's a 90 min interview transcript in data/interview.vtt, "
                  "can you pull the 5 strongest quotes and say why each one matters?",
        "expected_output": "Five quoted passages with speaker attribution and a "
                           "one-sentence rationale per quote.",
        "files": ["evals/files/interview.vtt"],
        "assertions": [
            {"id": "five_quotes", "kind": "llm",
             "text": "The output contains exactly five distinct quoted passages."},
            {"id": "speaker_attribution", "kind": "llm",
             "text": "Every quote is attributed to a named speaker from the transcript."},
            {"id": "rationale_per_quote", "kind": "llm",
             "text": "Each quote is followed by at least one sentence explaining why it matters."},
            {"id": "outputs_markdown", "kind": "script",
             "check": "has_file", "args": {"suffix": ".md"}},
        ],
    },
]

The kind field is what matters. llm assertions go to a judge. script assertions go to a Python function. Mixing them in one list means I can grade the whole case in a single pass.

Grading With Evidence, Not Vibes

Grading is where LLM judges earn their keep. I hand the judge the assertion, the output, and ask it to produce a pass or fail plus a short piece of evidence quoting or pointing at the output. Assertions that can be checked mechanically, like “file parses as JSON” or “exactly five rows,” go to a Python script instead. Scripts do not hallucinate.

Two discipline points matter here.

First, do not give the benefit of the doubt. If an assertion says “includes a rationale per quote” and the output has a section with the label but no real reasoning, that is a fail. The label is not the content.

Second, grade the assertion itself while grading the output. If an assertion is always passing in both with-skill and without-skill runs, it is dead weight and I kill it for the next iteration. If it is always failing in both, either the assertion is broken or the task is impossible and I need to fix whichever one it is.
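Grading the assertions themselves can be mechanised once pass/fail results exist for both arms. The sketch below assumes a hypothetical input shape, a mapping from assertion id to the list of outcomes across cases for each arm; `audit_assertions` and the verdict strings are illustrative names.

```python
def audit_assertions(with_skill: dict, without_skill: dict) -> dict:
    """Label each assertion by whether it can tell the two arms apart.

    Both arguments map assertion id -> list of bool outcomes across cases.
    """
    verdicts = {}
    for aid, with_results in with_skill.items():
        both = list(with_results) + list(without_skill.get(aid, []))
        if all(both):
            # Never fails anywhere, so it measures nothing.
            verdicts[aid] = "dead weight: passes in both arms"
        elif not any(both):
            # Never passes anywhere: the assertion or the task needs fixing.
            verdicts[aid] = "broken or impossible: fails in both arms"
        else:
            # Outcomes differ somewhere, so the assertion carries signal.
            verdicts[aid] = "keep"
    return verdicts


report = audit_assertions(
    with_skill={"five_quotes": [True, True], "outputs_markdown": [True, True]},
    without_skill={"five_quotes": [False, True], "outputs_markdown": [True, True]},
)
print(report)
```

In this toy input `five_quotes` is kept because the bare model fails it once, while `outputs_markdown` passes everywhere and is flagged as dead weight.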

Mechanical checks are tiny and boring. They are also the ones I trust most.

# evals/checks.py
import json
from pathlib import Path


def has_file(outputs: Path, suffix: str) -> tuple[bool, str]:
    # Pass if at least one file with the given suffix sits in the outputs folder.
    matches = list(outputs.glob(f"*{suffix}"))
    if not matches:
        return False, f"No file with suffix {suffix} in {outputs}"
    return True, f"Found {matches[0].name}"


def json_is_valid(outputs: Path) -> tuple[bool, str]:
    # Pass only if there is at least one JSON file and every one of them parses.
    files = list(outputs.glob("*.json"))
    if not files:
        return False, "No JSON files produced"
    for f in files:
        try:
            json.loads(f.read_text())
        except json.JSONDecodeError as e:
            return False, f"{f.name} failed to parse: {e}"
    return True, f"Parsed {len(files)} JSON files"


# Registry used by the grader to dispatch "script" assertions by name.
SCRIPT_CHECKS = {"has_file": has_file, "json_is_valid": json_is_valid}

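The LLM judge handles everything else. I use Sonnet as the judge model, mark the system prompt as cacheable so repeated assertions in an iteration can hit a warm cache (this only pays off once the prompt is long enough to meet the API's minimum cacheable length; shorter prompts are simply processed uncached), and instruct the judge to return a JSON shape I can parse without a second pass.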

# evals/judge.py
import json

from anthropic import Anthropic

client = Anthropic()

JUDGE_SYSTEM = """You grade one assertion against one model output.
Return ONLY a JSON object: {"passed": bool, "evidence": str}.

Rules:
- PASS only with concrete evidence. Quote the output directly.
- A label without substance is a FAIL. Do not give the benefit of the doubt.
- If the assertion is unverifiable from the output alone, return passed=false
  and say so in evidence."""


def grade_llm(assertion: str, output_text: str) -> dict:
    response = client.messages.create(
        model="claude-sonnet-4-6",
        max_tokens=512,
        system=[
            {
                "type": "text",
                "text": JUDGE_SYSTEM,
                "cache_control": {"type": "ephemeral"},
            }
        ],
        messages=[
            {
                "role": "user",
                "content": (
                    f"<assertion>{assertion}</assertion>\n"
                    f"<output>\n{output_text}\n</output>"
                ),
            }
        ],
    )
    # The judge is told to reply with bare JSON, so parse the first text block directly.
    return json.loads(response.content[0].text)
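Parsing the judge's reply with a bare json.loads assumes the judge always obeys the format. A more defensive variant, sketched here as an optional hardening step rather than part of the original judge, strips a surrounding code fence and fails closed on anything malformed. `parse_verdict` is a hypothetical helper name.

```python
import json
import re

FENCE = "`" * 3  # built programmatically so this example nests cleanly in markdown


def parse_verdict(raw: str) -> dict:
    """Turn a judge reply into {"passed": bool, "evidence": str}, failing closed."""
    text = raw.strip()
    # Drop a surrounding code fence (optionally tagged json) if the judge added one.
    fenced = re.fullmatch(FENCE + r"(?:json)?\s*(.*?)\s*" + FENCE, text, flags=re.DOTALL)
    if fenced:
        text = fenced.group(1)
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return {"passed": False, "evidence": f"unparseable judge reply: {raw[:200]}"}
    # Only a real boolean counts; strings like "yes" are treated as malformed.
    if not isinstance(data, dict) or not isinstance(data.get("passed"), bool):
        return {"passed": False, "evidence": f"malformed judge verdict: {raw[:200]}"}
    return {"passed": data["passed"], "evidence": str(data.get("evidence", ""))}
```

Failing closed matches the "no benefit of the doubt" rule: a reply that cannot be read is recorded as a fail with the raw text as evidence, so it shows up in review instead of crashing the grader.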

The grader that stitches the two together is a few lines. It walks the assertions on a case, dispatches each one to the right backend, and writes a grading.json next to the outputs.

# evals/grade.py
# Run as `python evals/grade.py` so evals.py, checks.py, and judge.py
# resolve as sibling modules.
import json
from pathlib import Path

from evals import EVALS
from checks import SCRIPT_CHECKS
from judge import grade_llm


def read_outputs(outputs: Path) -> str:
    # Concatenate the text-like output files, truncated so the judge prompt stays small.
    chunks = []
    for path in sorted(outputs.rglob("*")):
        if path.is_file() and path.suffix in {".md", ".txt", ".json", ".csv"}:
            chunks.append(f"### {path.name}\n{path.read_text()[:4000]}")
    return "\n\n".join(chunks)


def grade_case(case: dict, variant_dir: Path) -> None:
    outputs = variant_dir / "outputs"
    output_text = read_outputs(outputs)
    results = []
    for a in case.get("assertions", []):
        if a["kind"] == "script":
            passed, evidence = SCRIPT_CHECKS[a["check"]](outputs, **a.get("args", {}))
        else:
            verdict = grade_llm(a["text"], output_text)
            passed, evidence = verdict["passed"], verdict["evidence"]
        results.append({"id": a["id"], "passed": passed, "evidence": evidence})

    total = len(results)
    passed_count = sum(r["passed"] for r in results)
    summary = {
        "passed": passed_count,
        "failed": total - passed_count,
        "total": total,
        # Guard against cases that have no assertions yet.
        "pass_rate": passed_count / total if total else 0.0,
    }
    (variant_dir / "grading.json").write_text(
        json.dumps({"results": results, "summary": summary}, indent=2)
    )


if __name__ == "__main__":
    for case in EVALS:
        for variant in ("with_skill", "without_skill"):
            grade_case(case, Path(f"workspace/iteration-1/{case['id']}/{variant}"))

Aggregate Into a Delta, Not a Score

Once every run is graded, I roll them up into a single benchmark per iteration. The fields I care about are pass rate, mean time per run, and mean tokens per run, computed separately for with-skill and without-skill, plus the delta between the two.

The delta is the whole story. A skill that lifts pass rate by fifty points while adding ten seconds and two thousand tokens is almost always worth it. A skill that lifts pass rate by three points while doubling token cost is not. I would rather delete that skill and let the bare model handle it.
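The two examples above can be put on one scale by asking how many extra tokens each point of pass rate costs. This is a rough illustration, not a rule from the source: `tokens_per_point` is a hypothetical helper, and the 3,000-token baseline in the second call is an invented figure used only to make "doubling token cost" concrete.

```python
def tokens_per_point(delta_pass_rate: float, delta_tokens: float) -> float:
    """Extra tokens spent per percentage point of pass rate gained.

    delta_pass_rate is a fraction (0.5 means fifty points), matching the
    pass-rate delta computed per iteration.
    """
    points = delta_pass_rate * 100
    if points <= 0:
        # No gain (or a regression): any extra cost is wasted.
        return float("inf")
    return delta_tokens / points


# Fifty points for two thousand extra tokens.
print(tokens_per_point(0.50, 2000))  # 40.0

# Three points for a doubled token bill, assuming a 3,000-token baseline run.
print(tokens_per_point(0.03, 3000))
```

The first skill pays 40 tokens per point, the second roughly a thousand, which is the gap the paragraph above describes in words.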

The aggregation script is short. It walks one iteration directory, pulls the grading and timing files, and prints the delta I actually look at.

# evals/aggregate.py
import json, statistics
from pathlib import Path

KEYS = ("pass_rate", "total_tokens", "duration_ms")


def stats(rows: list, key: str) -> dict:
    # Mean and population standard deviation of one metric across cases.
    vals = [r[key] for r in rows]
    return {
        "mean": round(statistics.mean(vals), 3),
        "stddev": round(statistics.pstdev(vals), 3),
    }


def collect(iteration_dir: Path) -> dict:
    groups = {"with_skill": [], "without_skill": []}
    for case_dir in sorted(p for p in iteration_dir.iterdir() if p.is_dir()):
        for variant in groups:
            grading = json.loads((case_dir / variant / "grading.json").read_text())
            timing = json.loads((case_dir / variant / "timing.json").read_text())
            groups[variant].append({
                "pass_rate": grading["summary"]["pass_rate"],
                "total_tokens": timing["total_tokens"],
                "duration_ms": timing["duration_ms"],
            })

    summary = {v: {k: stats(groups[v], k) for k in KEYS} for v in groups}
    # Delta is with-skill minus without-skill for each metric.
    summary["delta"] = {
        k: round(summary["with_skill"][k]["mean"] - summary["without_skill"][k]["mean"], 3)
        for k in KEYS
    }
    return summary


if __name__ == "__main__":
    iteration_dir = Path("workspace/iteration-1")
    out = collect(iteration_dir)
    # Persist the benchmark so the revision step can read it back later.
    (iteration_dir / "benchmark.json").write_text(json.dumps(out, indent=2))
    print(json.dumps(out, indent=2))

A run against a three-case set might print something like this. The first field I read is always delta.pass_rate. If it is small, nothing else matters.

{
  "with_skill": { "pass_rate": { "mean": 0.833, "stddev": 0.094 } },
  "without_skill": { "pass_rate": { "mean": 0.333, "stddev": 0.118 } },
  "delta": { "pass_rate": 0.5, "total_tokens": 1700, "duration_ms": 13000 }
}

Patterns, Not Averages

Averages lie. After I compute the benchmark I pull on the individual cases and look for structure.

- Assertions that pass with the skill and fail without it are the skill’s actual value. I study those transcripts until I understand exactly which instruction or script did the work.

- Assertions that pass in both configurations are candidates for deletion.

- Assertions that are flaky, passing sometimes and failing others on the same prompt, point to either prompt ambiguity or instructions in the skill that leave too much room for interpretation.

- Runs that are three times slower than their peers get their transcripts read end to end. The bottleneck is almost always a single over-cautious instruction that sent the agent down a rabbit hole.
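Two of these patterns are easy to flag mechanically. The sketch below is illustrative only: `slow_runs` and `flaky_assertions` are hypothetical helpers, "peers" is interpreted as the median duration of all runs, and flakiness detection assumes each prompt has been run more than once, which a runner that executes each arm a single time would need to be extended to do.

```python
import statistics


def slow_runs(durations_ms: dict, factor: float = 3.0) -> list:
    """Return run ids whose duration is at least `factor` times the median run."""
    if len(durations_ms) < 2:
        return []
    median = statistics.median(durations_ms.values())
    return sorted(rid for rid, ms in durations_ms.items() if ms >= factor * median)


def flaky_assertions(repeats: dict) -> list:
    """Return assertion ids that both passed and failed across repeats of one prompt."""
    return sorted(aid for aid, results in repeats.items() if len(set(results)) > 1)


# Hypothetical durations for three runs, in milliseconds.
print(slow_runs({"case-a": 1000, "case-b": 1200, "case-c": 4000}))  # ['case-c']

# Hypothetical outcomes from running the same prompt three times.
print(flaky_assertions({"five_quotes": [True, False, True], "outputs_markdown": [True, True, True]}))  # ['five_quotes']
```

Both functions only point at where to look. The actual diagnosis still comes from reading the flagged transcripts.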

Humans Still Review

Assertions catch what I thought to check. A human reviewer catches everything else. For each test case I read the actual output alongside the grade and write a one-line note. If I have nothing to say, the note is empty and the case passed review. If I do have something to say, I make it specific enough to act on. “The chart months are in alphabetical order instead of chronological” is actionable. “Feels off” is not.

The Iteration Loop

After grading, review, and pattern analysis I have three signals: failed assertions, human notes, and execution transcripts. The most efficient way to turn them into skill improvements is to hand all three, along with the current SKILL.md, to a capable model and ask it to propose a revision. The model can spot patterns across runs that would take me an hour to find by hand.
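The failed-assertion signal can be gathered straight from the grading files. This is a minimal sketch assuming the iteration/case/variant/grading.json layout used by the grader in this article, with each result carrying `id`, `passed`, and `evidence`; `collect_failures` is a hypothetical helper, and the one-line-per-failure markdown format is just one reasonable choice.

```python
import json
from pathlib import Path


def collect_failures(iteration_dir: Path) -> str:
    """Build a markdown digest of every failed assertion in one iteration."""
    lines = []
    # Layout assumed: <iteration>/<case id>/<variant>/grading.json
    for grading_path in sorted(iteration_dir.glob("*/*/grading.json")):
        case_id, variant = grading_path.parts[-3], grading_path.parts[-2]
        grading = json.loads(grading_path.read_text())
        for result in grading["results"]:
            if not result["passed"]:
                lines.append(
                    f"- {case_id} [{variant}] {result['id']}: {result['evidence']}"
                )
    return "\n".join(lines) + ("\n" if lines else "")
```

Writing the returned string to a failures file in the iteration directory gives the revising model one compact list that names the case, the arm, and the judge's evidence for each failure.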

The guidance I give the revising model is the same every time:

- Generalise from the feedback. The skill needs to handle prompts I did not test.

- Keep the skill lean. Fewer, sharper instructions beat a rulebook.

- Explain the why. “Do X because otherwise Y happens” gets followed more reliably than “always do X.”

- Bundle repeated work. If three runs all wrote the same helper script, that script belongs in the skill, not in the transcripts.

The revision prompt itself is a short template I reuse across projects.

# evals/revise.py
from pathlib import Path

from anthropic import Anthropic

client = Anthropic()

REVISION_SYSTEM = """You are revising a SKILL.md based on eval results.
Propose a minimal diff that addresses the failures without bloating the skill.
Prefer deleting instructions over adding them. Every new rule must cite the
failure it addresses."""


def build_prompt(skill_md: str, benchmark: str, failures: str, notes: str) -> str:
    # Wrap each signal in its own tag so the model can tell them apart.
    return (
        f"<current_skill>\n{skill_md}\n</current_skill>\n"
        f"<benchmark>\n{benchmark}\n</benchmark>\n"
        f"<failed_assertions>\n{failures}\n</failed_assertions>\n"
        f"<human_notes>\n{notes}\n</human_notes>\n"
        "Return the revised SKILL.md in full, then a short changelog."
    )


def revise(iteration_dir: Path, skill_path: Path) -> str:
    response = client.messages.create(
        model="claude-opus-4-6",
        max_tokens=4096,
        system=[
            {"type": "text", "text": REVISION_SYSTEM,
             "cache_control": {"type": "ephemeral"}},
        ],
        messages=[{
            "role": "user",
            "content": build_prompt(
                skill_md=skill_path.read_text(),
                benchmark=(iteration_dir / "benchmark.json").read_text(),
                failures=(iteration_dir / "failures.md").read_text(),
                notes=(iteration_dir / "feedback.md").read_text(),
            ),
        }],
    )
    return response.content[0].text

I read the diff, apply the bits I agree with, bump the iteration number, and rerun the whole loop. The point is not to trust the revision wholesale. The point is that the model has already done the tedious cross-referencing of failures, notes, and transcripts so I can spend my attention on the judgment calls.

Each rerun sends every test case into a new iteration-N/ folder, where it is graded, aggregated, and reviewed like the one before. I stop when the delta plateaus, the human notes come back empty, or the skill is earning its keep and further tuning is not worth the evening.
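"Plateau" can be made concrete with a small check over the pass-rate delta recorded for each iteration. The thresholds here are assumptions chosen for illustration, not values from the source: `has_plateaued`, the two-iteration window, and the two-point minimum gain are all hypothetical.

```python
def has_plateaued(deltas: list, window: int = 2, min_gain: float = 0.02) -> bool:
    """True when none of the last `window` iterations improved the delta by `min_gain`.

    `deltas` holds one pass-rate delta per iteration, oldest first.
    """
    if len(deltas) <= window:
        # Not enough history to judge yet.
        return False
    recent = deltas[-(window + 1):]
    gains = [later - earlier for earlier, later in zip(recent, recent[1:])]
    return all(gain < min_gain for gain in gains)


# Hypothetical history: big early gains, then two iterations of noise.
print(has_plateaued([0.20, 0.45, 0.50, 0.51, 0.50]))  # True
print(has_plateaued([0.20, 0.45, 0.50]))  # False
```

With only a handful of test cases the delta is noisy, so a check like this is a prompt to stop and look rather than an automatic stopping rule.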

Why This Matters

Skills are the smallest unit of durable compounding in an agent workflow. Every skill I ship that actually earns its delta makes every future run a little cheaper and a little better, forever. Every skill I ship on vibes is a hidden tax that I will pay on every future run until someone notices. The eval loop is the difference between those two outcomes, and it is the cheapest insurance I know.

Related

- Auto-Harness Synthesis

- The Session Audit Meta-Prompt

- Agent-Driven Development

- Evaluator-Optimizer and Evolutionary Search

Sources

- Anthropic, Evaluating skill output quality, agentskills.io

- skill-creator, a reference skill that automates much of the loop